"Beyond Words" transforms the iconic telephone booth, historically a symbol of public linguistic exchange, into a microscale perceptual environment for exploring non-verbal communication through heart rhythms. The project was exhibited at the 10th Hong Kong–Shenzhen Bi-City Biennale of Urbanism/Architecture (UABB) in Hetao, Shenzhen, and was developed in collaboration with Prof. Mirna Zordan at the Future Spaces Vision Lab.

Project Overview

Interpersonal communication is often mediated through symbolic forms of language and text that distance interaction from bodily experience. We present "Beyond Words", an interactive installation that explores cardiac rhythms as material for body-based communication. Two participants enter connected booths where their pulse signals are shared and translated into a responsive audiovisual environment.

Setup

Synchronization Stage

Beyond reciprocal exchange, the system introduces a synchronization interaction: moments of heart-rate matching trigger amplified visual transformations, which let us examine how visible convergence shapes perceived connection. Through a pilot study and qualitative analysis, we investigate the user experience and how participants interpret cardiac rhythms as communicative cues. This work offers early reflections on designing biosensing systems for embodied interpersonal communication.

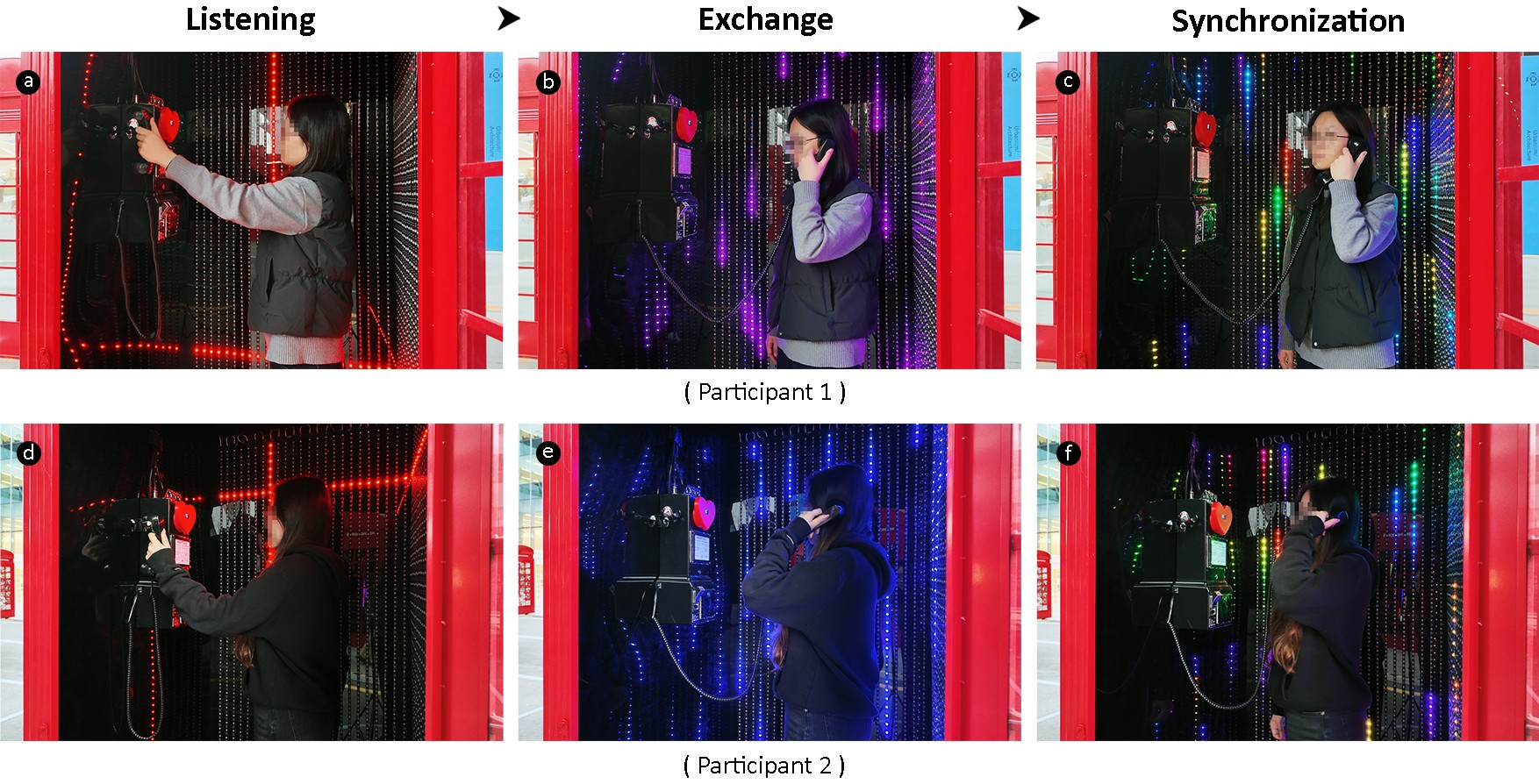

Three Interaction Stages

● Listening: When participants enter the booth and pick up the handset, audio instructions from the phone guide them to place a finger on the pulse sensor and remain still. Red scanning lines move across the LED panels, indicating the system is awaiting connection.

● Exchange: Once a stable pulse is detected, each participant's BPM appears on the OLED display and is transmitted to the opposite booth. The pulse drives a heartbeat sound and a rain effect: a faster BPM produces quicker audio and denser, faster-falling raindrops. One booth visualizes the rain in blue, the other in purple.

● Synchronization: When both BPM values remain within a defined proximity range for five seconds, the rain shifts into rainbow colors and begins rising upward instead of falling, signaling a moment of synchrony between participants’ heart rates. The OLED display indicates this state as “matched,” while the heartbeat audio remains unchanged.
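The stage progression above can be sketched as a small state tracker. This is a minimal sketch, not the installation's firmware: it assumes BPM readings arrive as floats where 0 means "no stable pulse yet", and it assumes a proximity range of 5 BPM, since the project specifies only the five-second window. `StageTracker`, `Stage`, and the constructor parameters are hypothetical names, written in portable C++17 so the logic can be tested off-device.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <optional>

enum class Stage { Listening, Exchange, Synchronization };

// Hypothetical tracker for the three interaction stages of one booth.
class StageTracker {
 public:
  // proximityBpm is an assumed value for the "defined proximity range";
  // dwellMs defaults to the five seconds stated in the project description.
  explicit StageTracker(float proximityBpm = 5.0f, uint32_t dwellMs = 5000)
      : proximityBpm_(proximityBpm), dwellMs_(dwellMs) {
    assert(proximityBpm >= 0.0f);
  }

  // nowMs: a millisecond clock (e.g. Arduino's millis()).
  // localBpm / remoteBpm: 0 means no stable pulse (assumed convention).
  Stage update(uint32_t nowMs, float localBpm, float remoteBpm) {
    const bool localOk = localBpm > 0.0f;
    const bool remoteOk = remoteBpm > 0.0f;
    const bool close = localOk && remoteOk &&
                       std::fabs(localBpm - remoteBpm) <= proximityBpm_;

    if (!close) {
      withinSince_.reset();   // any divergence restarts the dwell timer
    } else if (!withinSince_) {
      withinSince_ = nowMs;   // first moment the two BPMs are within range
    }

    if (!localOk) return Stage::Listening;  // still waiting for a pulse
    // Unsigned subtraction stays correct across a clock wraparound.
    if (withinSince_ && nowMs - *withinSince_ >= dwellMs_) {
      return Stage::Synchronization;
    }
    return Stage::Exchange;
  }

 private:
  float proximityBpm_;
  uint32_t dwellMs_;
  std::optional<uint32_t> withinSince_;  // start of the current "close" run
};
```

In this sketch a booth stays in Listening until its own pulse is stable, and any moment where the BPM gap exceeds the range resets the five-second count. Because the elapsed time is computed with unsigned arithmetic, the check still holds when a 32-bit millisecond counter wraps around.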

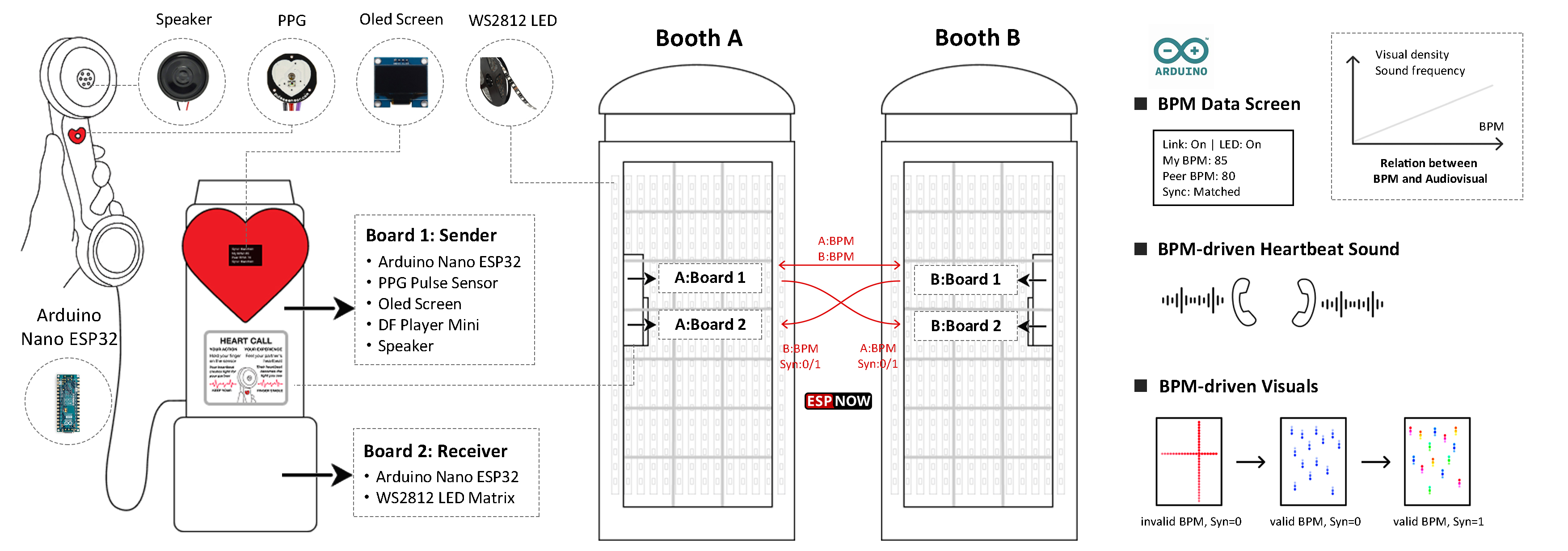

Technical Implementation

The system uses two Arduino Nano ESP32 microcontrollers per booth. A sender board handles pulse sensing, audio output, and data transmission via ESP-NOW. A separate receiver board drives the WS2812 LED matrices, keeping LED updates from causing timing conflicts with sensing and audio on the sender. Inside each vintage handset, a PPG sensor detects the pulse while a speaker outputs the heartbeat audio. The receiver board processes incoming heart-rate data and synchronization states to generate the rain and color-shift effects across reflective black panels, visually expanding the confined space.
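On the receiver side, the mapping from incoming heart-rate data and synchronization state to the rain effect might look like the following. It is a sketch under stated assumptions: the `HeartMessage` layout, the 50 to 130 BPM range, and the speed and spawn-rate ranges are all hypothetical, and the actual ESP-NOW transport and WS2812 drawing code are omitted. The heartbeat helper uses the standard relation of one beat every 60000 / BPM milliseconds.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Hypothetical payload; the project states only that heart-rate data and
// synchronization state are sent over ESP-NOW.
struct HeartMessage {
  float bpm;     // beats per minute received from the sender board
  bool matched;  // true while the synchronization stage is active
};

enum class RainColor { Blue, Purple, Rainbow };

struct RainParams {
  float speedRowsPerSec;  // how fast drops move across the LED matrix
  float dropsPerSec;      // how many new drops are spawned (density)
  int direction;          // +1 = falling, -1 = rising
  RainColor color;
};

// Assumed input and output ranges, chosen only for illustration.
constexpr float kMinBpm = 50.0f, kMaxBpm = 130.0f;
constexpr float kMinSpeed = 4.0f, kMaxSpeed = 16.0f;
constexpr float kMinRate = 2.0f, kMaxRate = 12.0f;

// Linearly maps bpm onto [lo, hi], clamping BPMs outside the assumed range.
float mapBpm(float bpm, float lo, float hi) {
  const float t = (std::clamp(bpm, kMinBpm, kMaxBpm) - kMinBpm) /
                  (kMaxBpm - kMinBpm);
  return lo + t * (hi - lo);
}

// Faster BPM gives faster, denser rain; a match flips the rain upward
// and switches it to rainbow colors. boothColor is the booth's base color.
RainParams rainFor(const HeartMessage& msg, RainColor boothColor) {
  assert(boothColor != RainColor::Rainbow);
  RainParams p;
  p.speedRowsPerSec = mapBpm(msg.bpm, kMinSpeed, kMaxSpeed);
  p.dropsPerSec = mapBpm(msg.bpm, kMinRate, kMaxRate);
  p.direction = msg.matched ? -1 : +1;
  p.color = msg.matched ? RainColor::Rainbow : boothColor;
  return p;
}

// Heartbeat audio timing: one beat every 60000 / bpm milliseconds (rounded).
uint32_t beatIntervalMs(float bpm) {
  assert(bpm > 0.0f);
  return static_cast<uint32_t>(60000.0f / bpm + 0.5f);
}
```

Keeping this mapping as pure functions separates the visual rules from the LED driver, so the same parameters could feed whatever frame loop renders the matrices.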

System Diagram and Data Flow

Prototyping Process

Exploratory Findings

A pilot study was conducted with six participants. After a 10-minute interaction with the installation, participants completed an emotional journey map and a semi-structured interview. Findings suggest that pulse rhythm was perceived as an authentic, universal, and effective communicative cue. While synchronization emerged as the most salient and emotionally resonant moment, participants also expressed a desire for deeper and more controllable forms of shared engagement beyond momentary alignment. These insights highlight both the communicative potential of physiological signals and the importance of interaction depth, feedback clarity, and sensing stability in shaping meaningful embodied experiences.

Future work will refine the interaction design to support richer shared actions, extend engagement over longer durations, and improve sensing and feedback mechanisms to further explore alternative pathways for embodied communication.